We’re seeing the next generation of security debt unfolding before our eyes (and why it’s not too late to stop it)

Posted: Thursday, April 2, 2026

Author: Jason Garbis

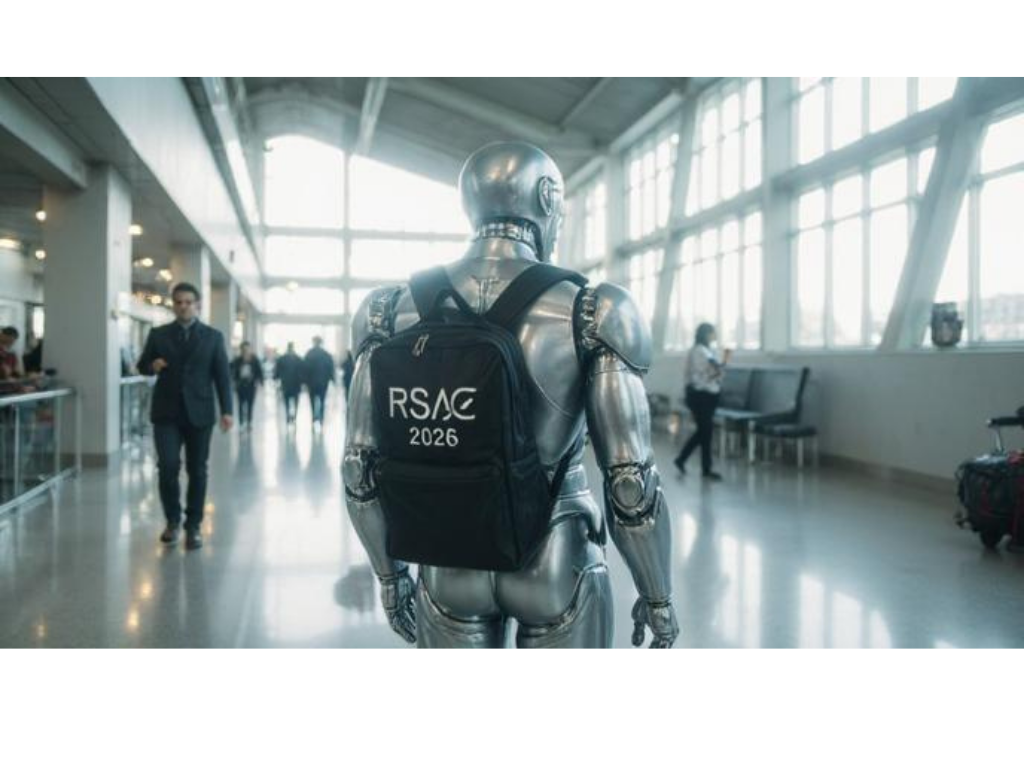

Last week was RSA Conference 2026, the largest annual gathering of information security professionals. With 44,000 attendees and over 600 vendors present, there was no shortage of literal and metaphorical noise to make sense of. To that end, I’d like to share a few thoughts and key takeaways from the conference. (If you’d like an informal play-by-play recap, you can find my series of short video commentaries here.)

AI as an inflection point

AI was clearly on everyone’s mind, and not only because of the plethora of AI-themed vendor messaging on the show floor. Security for AI systems was probably the main topic of conversation and the focus of many session presentations, closely followed by its “flip side”: applying AI to improve security.

But I want to drill down into that first topic, securing AI systems, because I’m convinced that this is one of those crucial moments for enterprise information security leaders. With the extremely rapid deployment of agentic AI, we run the risk of watching the next generation of security debt unfold before our eyes. Specifically, any unmanaged and unconstrained agentic AI system deployed by the business needs to be viewed as a clear and present danger. This could take the form of an agentic AI system using an MCP (Model Context Protocol) server to access corporate data or systems, or a local OpenClaw agent running on a user’s device (naturally, granted full read and write access). And this is happening every day in many enterprises.

The adoption of AI has been extraordinarily rapid, and not just within typical early-adopter industries. As the head of Google Cloud Security Services stated in an RSAC panel session, they’re seeing significantly faster adoption of AI than of previous technology waves, even within highly regulated environments.

The bottom line is that CISOs need to invest the time and energy to ensure that they and their teams are not just conversant in AI, but fluent in it. AI usage is an unstoppable force in the enterprise, and as security leaders, we have to take the initiative to securely enable the business’s use of AI.

Information Security’s Mission: Prevent the Bad and Enable the Good

I often talk about why, with Zero Trust, it’s important for security teams to position themselves not just as the group that prevents bad things from happening, but also as the group that securely enables good things to happen. Agentic AI adoption is the best and most current example of a business imperative that absolutely needs to be supported and even encouraged, but only through approved templates.

As a security team, your immediate priority should be to define, communicate, and enforce the use of these so-called golden paths: curated, documented, and streamlined templates, toolkits, and design patterns, backed by automated deployment and support.

Chances are your organization already has AI systems and agents deployed, and you’ll need to work to gain visibility into them. Think of this as your team’s learning journey: agentic AI is so new that everyone in the industry is still in the early stages of learning. And yet, we need to remember that our foundational best practices are still sound and still very much apply to AI. If your organization doesn’t already have an AI Center of Excellence, establish one. If one exists, insist that your security team have a prominent role in it.

Back to the Basics (for Now)

When we think about the problems and risks that agentic AI brings to the enterprise, it’s clear that some of them are still unsolved; I’ll touch on those in a moment. But it’s important to recognize that roughly 80% of the challenges that AI deployment brings to the enterprise are solved problems. Specifically, I’m talking about basic information security best practices, such as identity management, authentication, workload identification, deployment automation and lifecycle management, and visibility. We know how to solve every one of these in enterprise environments by applying mature technologies along with well-defined, enforced processes.

Now, I’m a realist: I work with enterprises every day, helping them assess their maturity and create roadmaps to improve security outcomes. I see the messy, immature environments and the historical underinvestment in security that plague many enterprises. The reality is that many enterprises are not as effective as they want and need to be at applying basic security best practices. So use AI adoption as a catalyst to improve this. Even if you start out with small pockets of enforcement, that’s far better than the alternative.

Push the rock downhill, not uphill

One subtle but important part of our Zero Trust Blueprint approach is that we work with clients to identify current and future business drivers, priorities, and investments, and then use them to overcome resistance and accelerate the adoption of security improvements. Our guidance is for security teams to learn enough about the business to have meaningful “what would it mean to you if…” conversations with non-security peers and stakeholders.

Compare the response you’ll get to “We’re rolling out security changes to how you log in. Mandatory training is next week, and everyone needs to sign up” with the response to “You know that annoying login re-prompt you keep getting with the VPN? We’re making it disappear completely. We only have room for one more department in next week’s pilot. Are you interested?” Same technology and same security change, but a world of difference in the reaction.

This same approach should apply to AI. You want to be able to approach people within your organization with a message such as “Hey, we’re excited that you’re going to be applying AI to X. That’s really going to help you achieve Y and Z. We’ve created a simple and automated way for you and your team to get started quickly and easily, using proven tools and templates. This will help you accelerate your project.”

What about the 20% of unsolved problems?

While security fundamentals will close the gap for the majority of agentic AI security issues, the industry definitely still has a number of unsolved problems. Most notably, we have no standard or well-understood way to model and delegate authorization between users and agents, or among agents. There is a lot of ongoing work here, and I’m looking forward to seeing how vendors, enterprises, and standards organizations collaborate to solve this. I’ll be diving into this in more depth in upcoming blog posts as I continue my research and work to coalesce my thoughts into coherent ideas.

April 5, 2027

RSA Conference 2027 begins on April 5, 2027. Let’s make sure that the next time all 44,000 of us get together, we have success stories to share about how we securely enabled our enterprises’ use of AI, and what we learned along the way.

Other Voices

I also recommend taking a look at the RSAC recap blog post from Art Poghosyan, founder and CEO of Britive. His thoughts on the connections between managing agentic AI, data, and dynamic Zero Trust access controls are worth reading.

And while it’s not directly tied to RSAC, Token Security’s Agentic Pulse framework offers a useful taxonomy of agents and compares Access vs. Autonomy across three categories: Agentic Chatbots, Local Agents, and Production Agents.

Zero Trust Readiness

Want to evaluate your enterprise’s Zero Trust Readiness?

Take our free, 3-question self-assessment here and get your customized report in minutes.